CMSI 2210: Welcome to Week 04

This Week's Agenda

For this week, here's the plan, Fran…

- Announcements

- Homework 03 due Wednesday/Thursday

- Quiz next week

- Character Encoding

- Character Sets

- BCD, EBCDIC, and ASCII

- Unicode

- Other Character Sets

- Ways to Encode Characters

- About Strings

- More About C

- Wednesday/Thursday: In-class Exercise

Character Encoding

In computing, a character is a unit of information in textual data that has a name associated with it. Some easy examples, of course, come from the alphabet we are used to using, in which each of the symbols, or letters, is usually called a "character". However, there are LOTS of other characters, which go by names such as:

- PLUS SIGN

- CYRILLIC SMALL LETTER TSE

- CHEROKEE LETTER TLO

- BLACK CHESS KNIGHT

- PITCHFORK

- MUSICAL SYMBOL FERMATA BELOW

A grapheme is a minimally distinctive unit of writing in some writing system. It is what a person usually thinks of as a character. However, it may take more than one character to make up a grapheme. For example, the grapheme:

R̊

is made up of two characters (1) LATIN CAPITAL LETTER R and (2) COMBINING RING ABOVE. The grapheme:

நி

is made up of two characters (1) TAMIL LETTER NA and (2) TAMIL VOWEL SIGN I. This grapheme:

🚴🏾

is made up of two characters (1) BICYCLIST and (2) EMOJI MODIFIER FITZPATRICK TYPE-5. This grapheme:

🏄🏻♀

is made up of four characters (1) SURFER, (2) EMOJI MODIFIER FITZPATRICK TYPE-1-2, (3) ZERO WIDTH JOINER, (4) FEMALE SIGN. And this grapheme:

🇨🇻

requires two characters: (1) REGIONAL INDICATOR SYMBOL LETTER C and (2) REGIONAL INDICATOR SYMBOL LETTER V. It's the flag for Cape Verde (CV).

Finally, a glyph is a picture of a character [or grapheme]. Two or more characters can share the same glyph [e.g. LATIN CAPITAL LETTER A and GREEK CAPITAL LETTER ALPHA], and one character can have many glyphs [think fonts, e.g., A A A and so on…]

Investigation: Go find out from the Internet [a good place to start might be codepoints.net] what you need to know to answer the question, "What glyph can represent the three characters LATIN SMALL LETTER O WITH STROKE, DIAMETER SIGN, and EMPTY SET?"

Then draw your own copy of it.

Investigation: Go find out from the Internet what you need to know to answer the question, "What two characters would you use to make the Spanish flag?"

Character Sets

A character set, then, is a set of these characters that are linked by some important unifying characteristic[s]. It has two parts: (1) a repertoire, which is the characters which make up the set, and (2) a code position mapping, which is a function mapping a non-negative integer to EACH INDIVIDUAL character in the repertoire.

As usual, the term mapping means a one-to-one correspondence between these two values. For example, if the value 65 is set up to correspond to the character LATIN CAPITAL LETTER A, that is the kind of mapping referred to here. This gives you another term to know: when an integer i maps to a character c, we say i is the code point of c.

A character set, then, can also be considered a set of code points.

BCD, EBCDIC, and ASCII

Three older examples of character sets are BCD [which stands for Binary-Coded Decimal], EBCDIC [which stands for Extended Binary Coded Decimal Interchange Code], and ASCII [which stands for American Standard Code for Information Interchange].

BCD is the oldest of the three. It is a way of representing decimal numbers in which each digit is encoded into a specific fixed number of bits. Usually, either four or eight bits are used, with four being the default since that is all that are required. Note that BCD is specifically used for numeric digit values, NOT for letter characters, and as implied by the name, it encodes decimal digits.

The main value of BCD is that it is easier for humans to read than normal binary, because each four bits [or "tetrade", another word for "nybble"] handles only one digit. Since the values only go from zero to nine, the bit patterns correspond to the binary tables we saw last week. BCD was a part of most operating systems and processor instruction sets up until modern times. With the advent of modern processors such as ARM and the 64-bit versions of x86 [which dropped the old x86 BCD instructions], however, it is rarely used. It is still useful to know, because there are hardware chips that use the encoding.

This link will give you more information, and this table shows the encoding scheme.

Because in most computers the smallest unit of data storage is one byte, there is a possibility for a lot of wasted space with BCD. For example, the number …

Packed BCD also allows for signed values, but the sign is put at the END instead of at the beginning, and consists of four bits, as shown in the figure below.

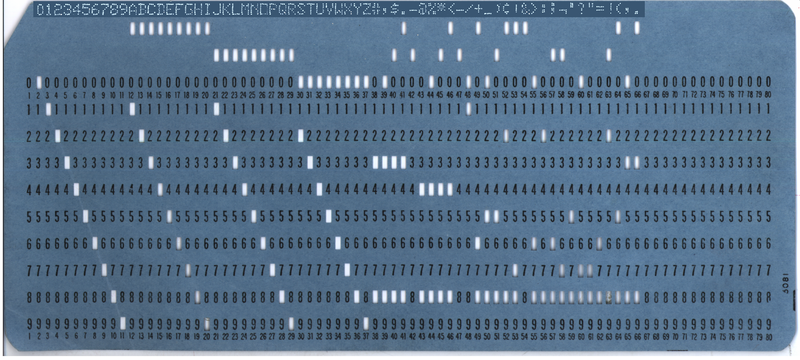

EBCDIC is probably one of the oldest ways of encoding characters so that digital computers can handle them. It started in 1963 – 1964, and was invented by IBM for use on its System/360 mainframe computers. [That should give you a heads-up on something else all good computer scientists should know about: the book "The Mythical Man-Month" by Fred Brooks, who managed the development of that system.]

EBCDIC is an 8-bit encoding scheme. It was created to extend BCD and its follow-on, BCDIC, which were originally six-bit codes. These two encodings were used to help ensure the physical integrity of punched cards, which at the time were used to input both code and data into the mainframe computers. All IBM mainframe computers used EBCDIC as the basic encoding scheme for many years. In fact, the Unicode Consortium has even proposed an EBCDIC-oriented Unicode Transformation Format called UTF-EBCDIC, which is designed to allow EBCDIC software to handle Unicode!


Apart from the modern Unicode sets, ASCII is the most well-known character encoding scheme. It was developed by a committee of the American Standards Association [the predecessor of ANSI], and its development was in progress during the same period that IBM was working on EBCDIC.

ASCII is a 7-bit code with 128 characters. Once the 8-bit byte became standard and people needed more than 128 characters, many 8-bit "extended ASCII" character sets appeared. These keep ASCII in their first 128 positions and add another 128 characters. The first 32 characters of ASCII [codes 0 through 31] are the so-called control characters. Another useful artifact of ASCII is that several programming languages [such as C and Java] treat individual characters as numbers equal to their codes. In such cases, you can actually add numeric values to characters and subtract them from characters, so characters can be used as array indices and for other such operations.

Unicode

Another example of a character set is Unicode. Here is part of its code point mapping [note that code points are traditionally written in hex]:

| Code Point | Name | Character |
|---|---|---|
| 25 | PERCENT SIGN | % |
| 2C | COMMA | , |
| 54 | LATIN CAPITAL LETTER T | T |
| 5D | RIGHT SQUARE BRACKET | ] |
| B0 | DEGREE SIGN | ° |
| C9 | LATIN CAPITAL LETTER E WITH ACUTE | É |
| 2AD | LATIN LETTER BIDENTAL PERCUSSIVE | ʭ |
| 39B | GREEK CAPITAL LETTER LAMDA | Λ |
| 446 | CYRILLIC SMALL LETTER TSE | ц |
| 543 | ARMENIAN CAPITAL LETTER CHEH | Ճ |
| 5E6 | HEBREW LETTER TSADI | צ |
| 635 | ARABIC LETTER SAD | ص |
| BEF | TAMIL DIGIT NINE | ௯ |
| 13CB | CHEROKEE LETTER QUV | Ꮛ |
| 2023 | TRIANGULAR BULLET | ‣ |
| 20A4 | LIRA SIGN | ₤ |
| 2105 | CARE OF | ℅ |
| 213A | ROTATED CAPITAL Q | ℺ |
| 21B7 | CLOCKWISE TOP SEMICIRCLE ARROW | ↷ |
| 2226 | NOT PARALLEL TO | ∦ |
| 2234 | THEREFORE | ∴ |
| 2248 | ALMOST EQUAL TO | ≈ |
| 265E | BLACK CHESS KNIGHT | ♞ |
| 1D122 | MUSICAL SYMBOL F CLEF | 𝄢 |
| 1F08E | DOMINO TILE VERTICAL-06-01 | 🂎 |
| 1F001 | MAHJONG TILE SOUTH WIND | 🀁 |
| 1F0CE | PLAYING CARD KING OF DIAMONDS | 🃎 |
| 1F382 | BIRTHDAY CAKE | 🎂 |
| 1F353 | STRAWBERRY | 🍓 |

Because characters can have multiple glyphs, Unicode lets you refer to characters unambiguously by writing U+ followed by four to six hex digits [e.g. U+00C9, U+1D122]. You can also represent them in HTML [as you can see from the code on this web page]: put the prefix &#X, then the hexadecimal code point, and end with a semicolon [e.g. &#XC9;]. [You can also use the equivalent decimal value by converting the hex and dropping the X...]

How many characters are there?

How many characters are possible with Unicode? The powers that be have declared that the highest code point they will ever map is 10FFFF, so it seems like 1,114,112 code points are possible. However, they also declared they would never map characters to code points D800..DFFF, FDD0..FDEF, {0..F}FFFE, {0..F}FFFF, 10FFFE, 10FFFF [2114 code points]. So the maximum number of possible characters is 1,111,998.

How many characters have been assigned [so far]? See this cool table at Wikipedia to see how many characters have actually been mapped in each version of Unicode. [BTW, if you don't peek, Unicode Version 10.0 has 136,755 characters mapped, so there is a lot of room to grow.]

Where can I find all of them?

Please see the complete and up-to-date code charts, with example glyphs of every character. If you would like a much easier way to browse the characters [and why wouldn't you?], check out the beautiful charbase.com and codepoints.net. [Charbase and Codepoints are run by volunteers, so they may not be up to date.]

Blocks

The code points are not assigned haphazardly. They are allocated into blocks. There are around 300 blocks. Make sure to see the official blocks file at Unicode.org. If you need a little preview, here is a little slice of that file:

0000 — 007F Basic Latin

0080 — 00FF Latin-1 Supplement

0250 — 02AF IPA Extensions

0400 — 04FF Cyrillic

0F00 — 0FFF Tibetan

2200 — 22FF Mathematical Operators

2700 — 27BF Dingbats

3100 — 312F Bopomofo

10860 — 1087F Palmyrene

12000 — 123FF Cuneiform

1D100 — 1D1FF Musical Symbols

1F000 — 1F02F Mahjong Tiles

F0000 — FFFFF Supplementary Private Use Area-A

100000 — 10FFFF Supplementary Private Use Area-B

Categories

Every Unicode code point is assigned various properties, such as name and category. The complete list is beyond the scope of this discussion, but you can find out more about them on the Internet.

Combining Characters, Modifiers, and Variation Selectors

It's common to use multiple characters to make up graphemes. This is often done to put marks on letters, modify skin tones, modify genders, and create more complex graphemes. Examples:

| ş̌́ | U+0073 LATIN SMALL LETTER S U+0327 COMBINING CEDILLA U+030C COMBINING CARON U+0301 COMBINING ACUTE ACCENT | [Note that this looks different on different browsers…] |

| 👶 | U+1F476 BABY | baby |

| 👶🏻 | U+1F476 BABY U+1F3FB EMOJI MODIFIER FITZPATRICK TYPE-1-2 | baby: light skin tone |

| 👶🏾 | U+1F476 BABY U+1F3FE EMOJI MODIFIER FITZPATRICK TYPE-5 | baby: medium-dark skin tone |

| 👶🏿 | U+1F476 BABY U+1F3FF EMOJI MODIFIER FITZPATRICK TYPE-6 | baby: dark skin tone |

| 👩⚖️ | U+1F469 WOMAN U+200D ZERO WIDTH JOINER U+2696 SCALES U+FE0F VARIATION SELECTOR-16 | woman judge |

| 👩🏿🔬 | U+1F469 WOMAN U+1F3FF EMOJI MODIFIER FITZPATRICK TYPE-6 U+200D ZERO WIDTH JOINER U+1F52C MICROSCOPE | woman scientist: dark skin-tone |

| 🤽🏾♀️ | U+1F93D WATER POLO U+1F3FE EMOJI MODIFIER FITZPATRICK TYPE-5 U+200D ZERO WIDTH JOINER U+2640 FEMALE SIGN U+FE0F VARIATION SELECTOR-16 | woman playing water polo: medium-dark skin tone |

See all the emojis at Unicode.org.

Investigation: Research and describe the function of VARIATION SELECTOR-16. How is it different from VARIATION SELECTOR-15?

Where can I get a list of all the fun stuff?

Awesome Codepoints is super cool.

Other Character Sets

Unicode is really the only character set you should be working with. However, other character sets exist, and you should probably know something about them.

ISO8859-1

ISO8859-1 is a character set that is exactly equivalent to the first 256 mappings of Unicode. Obviously it doesn't have enough characters, but it also maps nicely to ASCII for backward compatibility.

ISO8859-2 through ISO8859-16

These charsets also have 256-character repertoires. [ISO8859-12 was abandoned, so there are actually 14 of them.] They all share the same characters in the first 128 positions, but differ in the next 128. Again, this is for backward compatibility with ASCII. Details at http://www.unicode.org/Public/MAPPINGS/ISO8859/.

Windows-1252

This character set, also known as CP1252, has a repertoire of 256 characters and can be found at http://www.unicode.org/Public/MAPPINGS/VENDORS/MICSFT/WINDOWS/CP1252.TXT. It is very close to ISO8859-1. The two differ in positions 80..9F, where Windows-1252 has printable characters such as curly quotes and the euro sign and ISO8859-1 has control characters. Be careful with this set! Users of Windows systems often unknowingly produce documents with this character set. They then forget to specify it when they make these documents available on the web or transport them via other protocols, which tend to default to Unicode. The end result is garbled text, which is annoying. It's best to avoid this.

ASCII

The last thing about ASCII is that it is exactly equivalent to the first 128 mappings of Unicode. Obviously it doesn't have enough characters for EVERYTHING. However, it is commonly used, because many Internet protocols require it! It is a common subset of many character sets and something most people can agree on. Here is a link to an ASCII table.

A Quick Quiz to Test Your Knowledge

Open a browser and navigate to this site: https://kahoot.it/.

Enter the game PIN in the box to join the game.

Ways to Encode Characters

A character encoding specifies how a character [or character string] is encoded in a bit string. Character sets with 256 or fewer characters have only a single encoding: they encode each character in a single byte [so in some sense the character set and the character encoding are about the same]. But there are, naturally, many encodings of Unicode. The most important are UTF-32, UTF-16 and UTF-8.

UTF-32

This is the simplest. Just encode each character in 32 bits. The encoding of a character is simply its code point! Couldn't be more straightforward. Of course, just try convincing people to actually USE four bytes per character.

There are actually two kinds: UTF-32BE [Big Endian] and UTF-32LE [Little Endian]. Examples:

| Unicode Character | UTF-32BE | UTF-32LE |

|---|---|---|

| RIGHT SQUARE BRACKET [U+005D] | 00 00 00 5D | 5D 00 00 00 |

| CHEROKEE LETTER QUV [U+13CB] | 00 00 13 CB | CB 13 00 00 |

| MUSICAL SYMBOL F CLEF [U+1D122] | 00 01 D1 22 | 22 D1 01 00 |

Note that every character sequence can be encoded into a byte sequence, but not every byte sequence can be decoded into a character sequence. For example, the byte sequence CC CC CC CC does not decode to any character, because there is no code point CCCCCCCC in Unicode [the largest is 10FFFF].

UTF-32

The Good: It's fixed width! Constant-time to find the nth character in a string [provided you care].

The Bad: It's pretty bloated.

UTF-16

In UTF-16 some characters are encoded in 16 bits and some in 32 bits.

| Character Range | Bit Encoding |

|---|---|

| U+0000 ... U+FFFF | xxxxxxxx xxxxxxxx |

| U+10000 ... U+10FFFF | let y = X - 0x10000 [where X is the code point] in 110110yy yyyyyyyy 110111yy yyyyyyyy |

Note that all characters requiring 32 bits have their first 16 bits in the range D800..DBFF and their second 16 bits in the range DC00..DFFF. Pretty slick, right? Those code points are never assigned to any character in Unicode.... How perfect, dontcha think?

There are actually two kinds: UTF-16BE and UTF-16LE. Examples:

| Unicode Character | UTF-16BE | UTF-16LE |

|---|---|---|

| RIGHT SQUARE BRACKET [U+005D] | 00 5D | 5D 00 |

| CHEROKEE LETTER QUV [U+13CB] | 13 CB | CB 13 |

| MUSICAL SYMBOL F CLEF [U+1D122] | D8 34 DD 22 | 34 D8 22 DD |

UTF-16

The Good: Nothing is good about this encoding.

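The Bad: Variable width, and it usually uses more space than UTF-8 in real-world text. Yes, people, even for East Asian scripts: those characters take 3 bytes in UTF-8 versus 2 in UTF-16, but once markup and other ASCII text are mixed in, UTF-8 often comes out ahead.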

The Ugly: It's what JavaScript thinks characters are. Facepalm.

UTF-8

Here's another variable length encoding.

| Character Range | Bit Encoding | Number of Bits |

|---|---|---|

| U+0000 ... U+007F | 0xxxxxxx | 7 |

| U+0080 ... U+07FF | 110xxxxx 10xxxxxx | 11 |

| U+0800 ... U+FFFF | 1110xxxx 10xxxxxx 10xxxxxx | 16 |

| U+10000 ... U+10FFFF | 11110xxx 10xxxxxx 10xxxxxx 10xxxxxx | 21 |

Examples:

| Unicode Character | UTF-8 Encoding |

|---|---|

| RIGHT SQUARE BRACKET [U+005D] | 5D |

| LATIN CAPITAL LETTER E WITH ACUTE [U+00C9] | C3 89 |

| CHEROKEE LETTER QUV [U+13CB] | E1 8F 8B |

| MUSICAL SYMBOL F CLEF [U+1D122] | F0 9D 84 A2 |

And yeah, you never have to care about big-endian or little-endian with UTF-8.

UTF-8 absolutely rocks. The number of advantages it has is stunning. For example:

- ASCII text is unchanged in UTF-8.

- Non-ASCII characters are never coded with ASCII characters.

- C programmers that have assumed that the octet 00 terminates a string won't have to change their code [UTF-16 is full of these things!].

- Western languages can represent text in about 1.1 bytes per character—big savings over the other UTFs.

- When decoding you can always determine if you've ended up in the middle of a multibyte encoding [every continuation byte starts with 10, and no first byte does].

- The number of leading ones in the first byte tells you how many bytes the character needs.

- Encoding and decoding are done with shifts and logical bitmask operations, no division, so processing is very fast.

- You can sort [lexicographically] by simply pretending each byte is a character: byte-by-byte order is the same as code point order.

- The octets FE and FF never appear, so mass confusion about byte-order marks and end-of-file-markers improperly processed by lazy C programmers never occurs.

Here's Tom Scott's overview of UTF-8:

You should also read the UTF-8 Everywhere Manifesto.

Normalization

Here are two character sequences:

- U+0065 LATIN SMALL LETTER E, U+0300 COMBINING GRAVE ACCENT — è

- U+00E8 LATIN SMALL LETTER E WITH GRAVE — è

In some sense they should represent the same text, right? In fact, they differ only in that the second sequence uses a precomposed character. Since the character sequences are different, we can get them to compare equal only if we normalize them. Unicode defines four NORMALIZATION FORMS: NFD, NFC, NFKD, and NFKC. The D forms fully decompose precomposed characters into a base character plus combining marks. The C forms decompose and then recompose them into precomposed characters wherever possible. The K ["compatibility"] forms also replace look-alike variants, such as the ﬁ ligature, with their plain equivalents. Normalizing first lets the character sequences be handled properly for things like searching and comparing. For example, normalizing the two strings given above to NFC will compress the first one into the second one. This saves space and also makes searching and comparing work for things like the tilde-n sequence.

About Strings

How hard can this be? Well, do you know the answers to these two simple questions?

- What is the length of a string? Oy. Maybe it is the number of graphemes? Or the number of characters? Or the number of bytes when encoded as UTF-8? Or the number of code units in a UTF-16 encoding? Does the BOM count at all? Oh and wait: do we count pre-composed characters as one character or multiple characters? or…… AAAAAAAARRRRRRRRGGGGGGGGGGHHHHHHHHHHHHH!!!

- When are two strings equal? Yes, there is the whole nastiness of whether we have reference equality (same string object) vs. value equality (same value), but what the heck does it mean to compare strings by value? Should we normalize first? If so, which normalization form should be used?

Well, it's hard. But if you've read this far, you at least are now better able to debug problems.

But wait, think about this a bit. Maybe the fact that these questions are hard means these are the wrong questions. Really. Consider:

- Why do you care how long a string is? You should really only care about (1) how many bytes of storage it needs, or (2) how many pixels or characters wide your rendered string is going to be. The number of characters is quite often irrelevant!

- Why are you comparing strings for equality anyway? Are you checking passwords? YOU BETTER BE HASHING THOSE, so then you will be comparing byte sequences or integer numbers! Are you looking up dictionary keys, or doing a search for something? In these cases, printable, normalized characters will be just fine. (Use normalization when building the search index and when parsing the query string.)

Aren't these problems with strings widely known? Well, Edaqa Mortoray has written about this. He says programming languages don't need a string type at all. And in fact, if your language does have one, it is probably badly broken. If you want to get a handle on all this, it helps to realize that strings and text are not the same thing.

Further Reading

Unicode is a huge topic. The standard is over 1,000 pages long. There is a ridiculous amount to learn. These notes have barely scratched the surface. Maybe you would like to go deeper. Maybe you would like to know everything there is to know.

Start with these articles, and follow links within them as well:

- Introduction to Unicode by Mathias Gaunard

- A Programmer's Introduction to Unicode by Nathan Reed

- Dark Corners of Unicode by Eevee

Got weeks of free time? You can read the entire one-thousand page Unicode standard.

And Now For Something Completely Different… More about C

We have seen the basics of a C program from the previous exercises. This week we'll go a step further and get into some details you will need to understand the code you'll be seeing and writing for your homework sets during the semester.

Because Java was originally based on C, the syntax is very similar. The if statement, the for loop, the while loop, the switch statement, as well as things like break, continue, and other keywords are all [pretty much] the same in C as you see them in Java. Further, the languages are both statically typed, which is another similarity. Also, variable declarations are as you would expect, such as int i = 23; or double d; or long long l = 32547619845; [on some systems, including Windows, a plain long is only 32 bits, which is too small for that value]. All statements end with a semicolon, as you would expect, and curly braces, square brackets, and parentheses all work the way you think. So do almost all of the operators [one difference: C has no >>> operator].

The differences arise when you begin to look at things like strings and arrays. What we usually treat as "objects" in Java can't be handled the same way in C. Further, Java handles objects internally by treating them as entities that the JVM will manage for you, but in C the programmer has to take on the task of that management herself. As an example, here is a C code snippet:

// in C
#include <stdio.h>

#define MY_SYMBOLIC_CONSTANT 23

int main( void ) {
    char s[] = "This is a test string";
    int myRA1[10];
    int myRA2[5];
    int i = 0;

    for( i = 0; i < 5; i++ ) {
        myRA2[i] = i + 1;
    }
    printf( "String s contains: %s\n", s );
    for( i = 0; i < 10; i++ ) {
        myRA1[i] = i * 2;
    }
    return 0;
}

Note several things here. Pick this code apart.

There are quite a few differences shown here, which also shows you why programmers who have cut their teeth on Java and other "nice" languages are loath to return to those "thrilling days of yesteryear" when we had to handle a lot of things on our own. Nevertheless, THIS IS WHERE MUCH OF THE POWER OF THE C LANGUAGE COMES FROM, because the programmer has the flexibility to do a LOT of things that Java won't let her do.

Another Small Example

Here is some code from the K&R book that shows something we did in CMSI 2120. We implemented a program to copy a file. Here is something similar, that copies one character at a time from its input to its output:

#include <stdio.h>

int main( int argc, char * argv[] ) {
    int c;

    c = getchar();
    while( c != EOF ) {
        putchar( c );
        c = getchar();
    }
}

Because C has expressions like Java [an assignment such as c = getchar() is itself an expression with a value], we can write this as:

// NOTE: this is just showing two ways of doing the SAME THING for illustration
#include <stdio.h>

int main( int argc, char * argv[] ) {
    int c;

    while( (c = getchar()) != EOF ) {
        putchar( c );
    }
}

Note several things here. Pick this code apart.

A Bit More Sophistication…

In Java, "sub-program units" are called "methods". In C, as in JavaScript, they are called functions. A function provides a convenient way to perform some computation. Once the function is tested and shown to operate properly, it can be used over and over again without worrying about its internals. At that point, the person using the function only needs to know its calling signature. As we've seen, a large number of functions are already defined for us as part of the "C standard library".

Here is a function that provides a simplified version of Java's Math.pow() method [integers only, and the exponent must be non-negative], along with the test code for it:

#include <stdio.h>

int power( int m, int n );

int main( int argc, char * argv[] ) {
    int i;

    for( i = 0; i < 10; i++ ) {
        printf( "%d %d %d\n", i, power( 2, i ), power( -3, i ) );
    }
    return 0;
}

int power( int base, int n ) {
    int i, p;

    p = 1;
    for( i = 1; i <= n; ++i ) {
        p = p * base;
    }
    return p;
}

Note several things here. Pick this code apart.

But Hey, Mr. K&R… What About Strings!?

The string is arguably one of the most used arrays in the C language. Yes, in this language strings are treated as arrays, specifically as arrays of characters. They are also one-dimensional, linear arrays, as you'd expect. However, what you DON'T expect is that you have to manage the string yourself. The special character \0 [the null character] marks the end of the string, so you need to dimension the string one character longer than its maximum length to account for the space required. Here is one last program for this week, which does a string manipulation. We'll write several functions for this effort, and put them all into the same source file. We'll pick it apart to mine the gold. See if you can figure out just what this code does.

#include <stdio.h>

#define MAXLINE 100

/* Named get_line rather than K&R's getline, because many C libraries now
   declare their own getline() in <stdio.h> and the two would clash */
int get_line( char line[], int maxline );
void copy( char to[], char from[] );

/* main program - what does this actually *DO*?? */
int main( int argc, char * argv[] ) {
    int len;                /* current line length */
    int max;                /* maximum length seen so far */
    char line[MAXLINE];
    char longest[MAXLINE];

    max = 0;
    while( (len = get_line( line, MAXLINE )) > 0 ) {
        if( len > max ) {
            max = len;
            copy( longest, line );
        }
    }
    if( max > 0 ) {
        printf( "%s", longest );
    }
    return 0;
}

/* read a line into string s, and return the length */
int get_line( char s[], int lim ) {
    int c = 0, i;           /* c starts at 0 so it is never read uninitialized */

    for( i = 0; (i < lim - 1) && ((c = getchar()) != EOF) && (c != '\n'); ++i ) {
        s[i] = c;
    }
    if( c == '\n' ) {
        s[i] = c;
        ++i;
    }
    s[i] = '\0';
    return i;
}

/* copy values in "from[]" into "to[]"; assume that "to[]" is big enough! */
void copy( char to[], char from[] ) {
    int i = 0;

    while( (to[i] = from[i]) != '\0' ) {
        ++i;
    }
}

Note several things here. Pick this code apart.

In-class Exercise #3 Carried Over From Last Week

Learning outcomes: The student will learn more about 1) the way values are encoded in real life situations; 2) that there is such a thing as Morse Code; and 3) the NATO phonetic alphabet, in case they want to use the "real" words for things like "NO, I mean S as in Sam…"

Your task this week is to work through the following encodings and write a short program for each one. Feel free to use any language you are familiar with, whether it be Python, Java, JavaScript, TypeScript, or anything else you want. Here are the problems:

Write a program that takes in a word or phrase and produces a listing to the terminal window that uses the standard NATO phonetic alphabet word for each letter. For example, entering the word "hello" will produce the listing:

HOTEL

ECHO

LIMA

LIMA

OSCAR

...and the phrase "blah blah" will produce:

bravo

lima

alpha

hotel

bravo

lima

alpha

hotel

There is no distinction required between upper and lower case letters, so you may treat them however you want. For a complete listing of the NATO alphabet, see the web page at this link.

I have provided you with starting code in my 'classwork04' folder in my GitHub repository at this link.

The Strength of C… Pointers!

One of the strongest parts of the C language is the ability to access the address of a variable, not just its value. In Java we don't have this ability. There we have either primitive values or REFERENCE variables that refer to objects allocated on the heap, and the language never lets us see or manipulate the actual addresses. In C, however, we can get at the address in memory of a variable at any time. Two operators make this possible: the star or asterisk (*), and the "and" or ampersand (&). Notice that both of these operators are overloaded. Star is also used for multiplication, and ampersand is also used for bitwise AND operations. The compiler has to use the context of the code to determine which you mean. It is very good at this, and it will also tell you if it doesn't understand or if it thinks you've screwed up.

The star operator is even more overloaded, based on the context in which it is used. We will see an example of this in a minute.

This facility involves what is commonly known as pointers.

Pointers and memory management are considered among the most challenging issues to deal with in low-level programming languages such as C. It is not that pointers are conceptually difficult to understand, nor is it difficult to comprehend how we can obtain memory from the operating system and how we return the memory again so it can be reused. The difficulty stems from the flexibility with which pointers let us manipulate the entire state of a running program. With pointers, every object anywhere in a program's memory is available to us — at least in principle. We can change any bit to our heart's desire. No data are safe from our pointers, not even the program that we run — a running program is nothing but data in the computer's memory, and in theory, we can modify our own code as we run it. (Mailund, 2021)

If V is a variable, let's say an int, then to get the address of where V is in memory we use the unary operator & and write &V. This is read "the address of V". [Unary operators in C group right to left, so in an expression such as *&V, the & is applied to V first.] Programs can also declare variables that hold addresses: these are pointers. Once declared, a pointer can hold an address as its value. The declaration int *p; declares p to be a variable of the type "pointer to int". In a certain sense, the star operator is the inverse of the ampersand operator in this context.

Because of the way arrays are stored in memory, the name of an array is treated [in most expressions] as a pointer to the first location in memory of that array. Further, since strings in C are treated as arrays of characters, the name of a string is treated as a pointer to the first character of the string. This can be a bit confusing when you first encounter it. The in-class exercise for this week will give you some [optional] practice with pointers, addresses, strings, and arrays.

If p is a pointer, then the direct value of p is a memory location, and the value of *p is the value stored at the memory location held in p. Suppose we declare:

double x, y, *p;

…then the two statements:

p = &x;

y = *p;

…are equivalent to the statement:

y = *&x;

…which in turn is equivalent to the statement:

y = x;

The following short program should help to illustrate the distinction between a pointer value and its dereferenced value:

#include <stdio.h>

int main( int argc, char * argv[] ) {
    int i = 17;
    int *p;

    p = &i;
    printf( "\n The value of i is %d\n", *p );
    /* %p is the format for printing an address; it expects a void pointer */
    printf( "\n The location [address] of i is %p\n\n", (void *) p );
    return 0;
}

Here is another example that prints the values so you can see them:

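#include <stdio.h>
#include <inttypes.h>   /* uintptr_t and PRIuPTR, for printing an address in decimal */

int main( int argc, char * argv[] ) {
    int i = 23;
    int *p2i = &i;
    int **p2p2i = &p2i;

    printf( "\n The value of i is %d\n", i );
    /* %d is not a valid format for a pointer, so convert the address to
       an unsigned integer type and use the matching format macro */
    printf( " The value of p2i [the address of i] is %" PRIuPTR "\n", (uintptr_t) p2i );
    printf( " or using 'p' specifier: %p\n", (void *) p2i );
    printf( " The value of p2p2i [the address of p2i] is %" PRIuPTR "\n", (uintptr_t) p2p2i );
    printf( " or using 'p' specifier: %p\n", (void *) p2p2i );
    return 0;
}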

When we run this code on one particular machine, we get the output below [your addresses will be different]:

The value of i is 23

The value of p2i [the address of i] is 6422216

or using 'p' specifier: 0061FEC8

The value of p2p2i [the address of p2i] is 6422212

or using 'p' specifier: 0061FEC4

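Notice that when we want to print the actual address value, we can use %p as the format specifier in the printf() function call [passing the pointer as a void *]. Most compilers will then print the value in hex automatically, although the exact format is up to the implementation.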

Here is a link to a document with more information about pointers, which I have put into my repository. Check the link to PointerDiagrams.docx or PointerDiagrams.pdf.

Now, why on earth would we need to know the address of a variable? There are a couple of reasons. We'll see more of this in the coming weeks, but for now you should know that it has to do with the way arguments are passed to functions in C.

When you call a function and pass an argument, a copy of that argument's value is made [traditionally on the stack, although modern compilers often pass arguments in registers]. It is that copy that is used INSIDE the function. This is known as pass by value. When the function returns, the caller's variable still holds its original value [actually, it never changed].

If we WANT to change the value [what is sometimes known as "side effects"], we have to pass the address of the variable. That is, we pass a "pointer to" the variable, so that when it is operated on inside the function, the original value gets changed. Here is some code to demonstrate:

#include <stdio.h>
#include <inttypes.h>   /* uintptr_t and PRIuPTR, for printing an address in decimal */

int func1( int value ) {
    printf( " Inside func1, value is %d\n", value );
    value += 17;            /* changes only func1's own copy */
    printf( " Inside func1, after addition, value is %d\n", value );
    return 0;
}

int func2( int *value ) {
    printf( " Inside func2, value is %d\n", *value );
    *value += 17;           /* changes the caller's variable through the pointer */
    printf( " Inside func2, after addition, value is %d\n", *value );
    return 0;
}

int main( int argc, char * argv[] ) {
    int i = 23;
    int *p2i = &i;
    int **p2p2i = &p2i;

    printf( "\n The value of i is %d\n", i );
    printf( " The value of p2i [the address of i] is %" PRIuPTR "\n", (uintptr_t) p2i );
    printf( " or using 'p' specifier: %p\n", (void *) p2i );
    printf( " The value of p2p2i [the address of p2i] is %" PRIuPTR "\n", (uintptr_t) p2p2i );
    printf( " or using 'p' specifier: %p\n\n", (void *) p2p2i );

    printf( " Before call to func1, i = %d\n", i );
    func1( i );
    printf( " After call to func1, i = %d\n", i );
    printf( " Before call to func2, i = %d\n", i );
    func2( &i );
    printf( " After call to func2, i = %d\n", i );
    return 0;
}

In-class Assignment #4

Learning outcomes: The student will learn more about 1) the concept of addressing; 2) how values are stored in memory; 3) how values can be accessed by their memory addresses; 4) how the idea of a "pointer" and associated pointer math can be used to access memory in a type-dependent fashion.

For the exercise, write a program called goFish.c that sums and averages a list of numbers, then creates a string and produces a count. You must use EITHER an array or a set of pointers as the data storage. The program's specifications are as follows:

- The numbers must be prompted for in a loop

- The special value -9999 is used to indicate that the entries are complete

- Store the values in a structure of some kind that is initially declared to be size 25 int elements

- Loop through the structure and sum the elements, then output the sum to the console

- Count the number of elements in the structure, and output their average to the console

- Loop through the structure again, and concatenate all the values into a long string

- Output the string to the console

- Loop through the string, and count all the 7 [seven] characters; this is like in the game "Go Fish" when you ask another player, "GIMME ALL YOUR SEVENS"

- Output the count to the console

Homework Assignment #3

Homework number three is due Wednesday/Thursday this week. Links to all the homework assignments are available on the syllabus page, but this reminder is just to make sure you know…

Week Four Wrap-up

That's probably enough for this week. Be sure to check out the class links page to see what's in the pipeline for next week.