CMSI 2210: Welcome to Week 02

This Week's Agenda

For this week, here's the plan, Fran…

- Announcements

- No class on Monday this week ~ Labor Day

- I know it's not fair to the Tue-Thu section 😧

- It's actually mostly unfair to the Mon-Wed section 😱

- Depends on your point of view: skipping class or missing class

- Computer Systems Organization

- Von Neumann Architecture

- Processor Parts and Organization

- Layers of Organization

- Assembly Language

- Topics in Computer Systems

- Computer Systems in Popular Culture

- Beginning Analysis of Number Systems

- Wednesday/Thursday: In-class Exercise:

Computer Systems Organization


In this course you will become familiar with all the parts that make up a computer. Of course, there is the Central Processing Unit or CPU, but as you know, there are many more parts in the box than just that. We'll learn about many of them and their functions, both individually and as part of the system. Part of any computer system is the input and output hardware, which allows the computer system to actually be a part of the … We will also look INSIDE the CPU to see how things function, and how this amazing device can do so many things so well. We'll deconstruct it from its highest view to its lowest level to see just what's going on.

You can view the computer system as a set of layers, with each layer working on the actions of the one beneath it. Here's an example diagram:


As can be seen in this version, a system can be viewed as having seven layers. Granted, this is a simplified view, but the idea is apparent. Each layer builds upon the functionality in the layer below it and provides functionality to the layer above it. In this diagram you can see, at each level, the type of function that is provided as well as some of the typical things used to implement those functions. For example, at the lowest level is the actual digital logic, the hardware that performs all the operations of the computer. The layer above that, the control layer, directs that hardware. Note that the control layer can be implemented as microcode, a kind of internal software [also sometimes known as firmware], or it can be realized by hardwiring components in the system. In this course, we will be learning in some detail about the digital logic, control, machine, and assembly language layers, and will use both the assembly language and high-level language layers to facilitate that investigation with real-life examples.

![thanks to Computer Organization and Architecture [2012] Null & Lobur p. 32](../private/compsyslayers.jpg)


Discussion: Are there any other parts to a computing system? What do you think they are? Would you consider them to be part of one of the system layers or something separate? Where does a human user of the system fit ~ is she part of the top layer of the model or is she on top of the entire model?

Von Neumann Architecture and Layers of Organization

Those of you who have taken CMSI 185, CMSI 186, and CMSI 281 have no doubt heard the name of John Von Neumann, the guy who is credited with the concepts of the modern computer and the computer architecture that bears his name. In those other classes, I have reduced the idea to three steps, which is a VERY simplified way of looking at it.

Quick Quiz: Does anyone remember what those three steps are?

![thanks to Computer Organization and Architecture [2012] Null & Lobur p. 32](../private/vonneumannarch.jpg)


In the diagram you can see the basic parts of a computer system. Each block in the diagram shows a collection of major components, and each of those collections has a HUGE number of components, literally thousands of them. These parts comprise what is known as a stored-program computer. This architecture provides the following things:

Briefly, the architecture runs programs using the following steps:
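To make the stored-program idea concrete, here is a minimal C++ sketch of a hypothetical toy machine. Everything in it was invented for this illustration and comes from no real processor: the four opcodes (`HALT`, `LOAD`, `ADD`, `STORE`), the two-cell instruction format, and the names `ToyMachine` and `makeAdditionDemo`. It shows two things. First, the program and its data sit in the same memory array. Second, the CPU simply repeats a fetch, decode, execute loop driven by its program counter.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// A hypothetical toy instruction set, invented for this sketch only.
// Each instruction occupies two memory cells: an opcode and an operand address.
enum Opcode : std::uint8_t {
    HALT  = 0,  // stop the machine
    LOAD  = 1,  // ACC <- mem[operand]
    ADD   = 2,  // ACC <- ACC + mem[operand]
    STORE = 3   // mem[operand] <- ACC
};

struct ToyMachine {
    std::array<std::uint8_t, 32> mem{};  // ONE memory holds both program and data
    std::uint8_t pc  = 0;                // program counter: address of the next instruction
    std::uint8_t acc = 0;                // accumulator register

    // Repeats fetch, decode, execute until a HALT instruction is reached.
    void run() {
        while (true) {
            // Fetch: read the instruction at the address held in the PC.
            assert(static_cast<std::size_t>(pc) + 1 < mem.size());
            std::uint8_t opcode  = mem[pc];
            std::uint8_t operand = mem[pc + 1];
            assert(static_cast<std::size_t>(operand) < mem.size());
            pc += 2;  // point at the next instruction

            // Decode and execute.
            switch (opcode) {
                case LOAD:  acc = mem[operand];  break;
                case ADD:   acc += mem[operand]; break;  // wraps modulo 256
                case STORE: mem[operand] = acc;  break;
                case HALT:  return;
                default:    assert(false && "unknown opcode"); return;
            }
        }
    }
};

// Builds a program that computes mem[20] = mem[16] + mem[17].
ToyMachine makeAdditionDemo(std::uint8_t a, std::uint8_t b) {
    ToyMachine m;
    const std::uint8_t program[] = {LOAD, 16, ADD, 17, STORE, 20, HALT, 0};
    for (std::size_t i = 0; i < sizeof program; ++i) m.mem[i] = program[i];
    m.mem[16] = a;  // the data lives in the same memory as the code
    m.mem[17] = b;
    return m;
}
```

For example, calling `run()` on the machine returned by `makeAdditionDemo(7, 5)` should leave 12 in memory cell 20. Because `HALT` is opcode 0 and memory starts zeroed, the toy machine stops if it ever runs into blank memory. That is a convenience of this sketch, not a property of real CPUs.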


Another, expanded, view of the system looks like the following diagram. The same components are shown, but with the addition of a system bus. The data bus carries the bits that make up the data being transferred between the CPU and main memory, or between the CPU and the input/output controller. That data is located using the address bus: the CPU places an address, a set of bits, onto the address bus to tell the system where the data it needs is expected to be. Finally, the control bus lets the various parts of the system interact in a controlled way, by raising or lowering specific lines on the bus. Because instructions and data travel between the CPU and memory over this same shared path, the CPU must alternate between fetching instructions and moving data, one transfer at a time. This limit on throughput is what is known as the Von Neumann Bottleneck.

![thanks to Computer Organization and Architecture [2012] Null & Lobur p. 32](../private/vonneumannarchmod.jpg)
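Here is a simplified C++ sketch of the three parts of the system bus working together. It assumes a hypothetical 8-bit data bus, a 16-bit address bus, and a tiny 256-cell memory. `SystemBus`, `Memory`, `busRead`, and `busWrite` are illustrative names only. Real buses involve clock timing, many more control lines, and arbitration between devices. The transaction counter hints at where the bottleneck comes from: every instruction fetch and every data access is a separate trip across the one shared bus.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// A simplified, hypothetical model of a system bus.
struct SystemBus {
    std::uint16_t address = 0;  // address bus: WHERE the data is
    std::uint8_t  data = 0;     // data bus: WHAT is being transferred
    bool readLine = false;      // control bus: request a read...
    bool writeLine = false;     // ...or a write
    int transactions = 0;       // how many times the bus has been used
};

struct Memory {
    std::array<std::uint8_t, 256> cells{};

    // Memory watches the control lines and responds to the address on the bus.
    // This toy memory only has 256 cells, so addresses wrap around.
    void respond(SystemBus& bus) {
        std::size_t index = bus.address % cells.size();
        if (bus.readLine)  bus.data = cells[index];
        if (bus.writeLine) cells[index] = bus.data;
    }
};

// CPU side of a read: put the address on the bus, raise the read line, take the data.
std::uint8_t busRead(SystemBus& bus, Memory& mem, std::uint16_t addr) {
    bus.address = addr;
    bus.readLine = true;
    mem.respond(bus);
    bus.readLine = false;
    ++bus.transactions;
    return bus.data;
}

// CPU side of a write: put address and data on the bus, raise the write line.
void busWrite(SystemBus& bus, Memory& mem, std::uint16_t addr, std::uint8_t value) {
    bus.address = addr;
    bus.data = value;
    bus.writeLine = true;
    mem.respond(bus);
    bus.writeLine = false;
    ++bus.transactions;
}
```

Writing a value and reading it back, for instance `busWrite(bus, mem, 0x80, 42)` followed by `busRead(bus, mem, 0x80)`, returns 42 and leaves `bus.transactions` at 2. A CPU that fetched an instruction and then its operand would likewise need two transfers, one after the other.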


By the way, Von Neumann is known for a LOT more than just the stored-program computer architecture. Here are some other things he was involved in or responsible for:


Devices and Peripherals and Software ~ More of the System

In addition to the basic parts as outlined above, there are many other things that connect to the system to help it function in a meaningful way. These are collectively referred to as devices and they come in three basic flavors, as you can see in the following table:

| Input Devices | Output Devices | Storage Devices |
|---|---|---|
| Keyboard, Mouse, Light Pen, Joystick, Joyswitch, Trackball, Tablet, Track Pad, Surface Digitizer, Microphone, Voice Recognizer, Scanner, Fingerprint Scanner, Card Reader, Paddle, Game Controller, Data Glove, Wand, Video Camera, Eye Tracker, Motion Sensor | Screen, Television, Printer (2D or 3D), Plotter, Film Recorder, Projector, Hologram Generator, Robot Arm, Speaker, Headphones, Voice Synthesizer, Card Punch | Disk Drive, CD Drive, DVD Drive, USB Flash Drive, Solid State Drive (SSD), Tape Drive |

Quick Quiz: Can you think of others? What have I missed here?

Software can be thought of as split between Applications software and Systems software, as shown in the following table:

| Applications Software | Systems Software |
|---|---|
| Written for people | Written for computers |
| Deals with human-centered abstractions like customers, products, orders, employees, players, users | Deals with computer-centered concepts like registers and memory locations |
| Solves problems of interest to humans, usually in application areas like health care, game playing, finance, etc. | Controls and manages computer systems |
| Concerned with anything high-level | Concerned with data transfer, reading from and writing to files, compiling, linking, loading, starting and stopping programs, and even fiddling with the individual bits of a small word of memory |
| Is almost always device- or platform-independent; programs concentrate on general-purpose algorithms | Deals with writing device drivers and operating systems, or at least directly using them; programmers exploit this low-level knowledge |
| Is often done in languages like JavaScript, Perl, Python, Ruby, Lisp, Elm, Java, and C# that feature automatic garbage collection and free the programmer from low-level worries | Is often done in assembly language, C, C++, and Rust, where programmers have to manage memory themselves |
| Is done in languages that generally have big fat runtime systems | Generally features extremely small run-time images, because it often has to run in resource-constrained environments |
| If done properly, can be very efficient: good garbage collection schemes allow much more efficient memory utilization than the usual memory micro-management common in C programs | If done properly, can be very efficient: you can take advantage of the hardware |

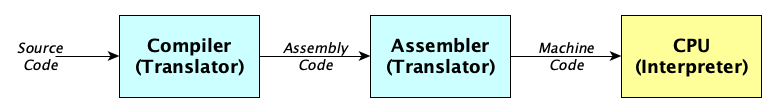

Also in terms of software, there are different levels of programming languages: High-level languages, Assembly Languages, and Machine Languages.

- A machine language is what a processor runs. It's pure binary.

- An assembly language has instructions that map one-to-one to machine language instructions. It's made of mnemonics.

- A high-level language uses far more abstract concepts to describe computations. It's human readable.

Often, people write in a high-level language, then run that source code through a compiler, which translates the code into assembly language, which an assembler then translates into machine language.

Here's an example. Start with this C++ function:

```cpp
long example(long x, long y, long z) {
    if (x > y) {
        return x * y - z;
    } else {
        return (z * y) * y;
    }
}
```

The compiler produces this assembly language:

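```asm
_Z7examplelll:              # mangled C++ name of example(long, long, long)
    cmp  rdi, rsi           # compare x (rdi) with y (rsi)
    jg   .L5                # if x > y, jump to .L5
    imul rdx, rsi           # rdx = z * y
    mov  rax, rdx           # rax = z * y
    imul rax, rsi           # rax = (z * y) * y, the return value
    ret
.L5:
    mov  rax, rdi           # rax = x
    imul rax, rsi           # rax = x * y
    sub  rax, rdx           # rax = x * y - z, the return value
    ret
```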

which becomes this in machine language [hexadecimal code on the left, binary code on the right]:

```text
4839F7      0100 1000 0011 1001 1111 0111
7F0C        0111 1111 0000 1100
480FAFD6    0100 1000 0000 1111 1010 1111 1101 0110
4889D0      0100 1000 1000 1001 1101 0000
480FAFC6    0100 1000 0000 1111 1010 1111 1100 0110
C3          1100 0011
4889F8      0100 1000 1000 1001 1111 1000
480FAFC6    0100 1000 0000 1111 1010 1111 1100 0110
4829D0      0100 1000 0010 1001 1101 0000
C3          1100 0011
```
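The hexadecimal and binary columns carry exactly the same information, because each hex digit stands for exactly four bits. The following C++ sketch reproduces the binary column from the hex column using that mapping. The function names `nibbleToBinary` and `hexToBinary` are illustrative only.

```cpp
#include <cassert>
#include <string>

// Converts one hexadecimal digit (0-9, A-F, a-f) to its 4-bit binary pattern.
std::string nibbleToBinary(char hexDigit) {
    int value = 0;
    if (hexDigit >= '0' && hexDigit <= '9')      value = hexDigit - '0';
    else if (hexDigit >= 'A' && hexDigit <= 'F') value = hexDigit - 'A' + 10;
    else if (hexDigit >= 'a' && hexDigit <= 'f') value = hexDigit - 'a' + 10;
    else { assert(false && "not a hex digit"); return ""; }

    std::string bits;
    for (int place = 3; place >= 0; --place) {   // the 8's, 4's, 2's, and 1's places
        bits += ((value >> place) & 1) ? '1' : '0';
    }
    return bits;
}

// Expands a string of hex digits into space-separated groups of four bits.
std::string hexToBinary(const std::string& hex) {
    std::string result;
    for (char c : hex) {
        if (!result.empty()) result += ' ';
        result += nibbleToBinary(c);
    }
    return result;
}
```

For instance, `hexToBinary("4839F7")` gives `0100 1000 0011 1001 1111 0111`, matching the first row.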

Discussion: Which language level would you rather program in, and why?

Processor Parts and Organization

As software developers, we need to ALWAYS remember one important fact: ANYTHING THAT CAN BE DONE WITH SOFTWARE CAN ALSO BE DONE IN HARDWARE! Why, then, isn't EVERYTHING done in hardware?

Quick Quiz: Any guesses?

The fact is that hardware runs faster, is more consistent in its operation, and is less prone to systemic errors in most cases than software. This makes it a prime candidate for implementing all kinds of things that could be done in software.


However, it takes more time and much more engineering rigor to develop hardware, and it is often orders of magnitude more expensive to correct mistakes. If a mistake is made in hardware, there are a number of ways to fix it, ranging from simple parts substitution all the way up to redesigning and remanufacturing a circuit board. With software, in most cases a developer can make a few simple changes to the code and rebuild the program quickly and easily. Here is an article that illustrates this point. If you look about halfway down the page, you'll see a description of some of the steps involved in creating the hardware for the F-14 Tomcat fighter plane [the plane used in the movie …]. But because of the stored-program computing approach, the two rely on each other for proper operation. Hardware relies on its …
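As a small illustration of one job described both ways, here is a C++ sketch that models an 8-bit ripple-carry adder gate by gate. This is the kind of circuit that performs in hardware the addition a program normally gets from `+`. It is still software, so it is slower than the CPU's built-in add, not faster. The point is only that the same function can be specified as a circuit. The names `fullAdder` and `rippleCarryAdd` are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

struct AdderOutput { unsigned sum; unsigned carry; };

// One full adder, written with the operations its logic gates perform.
AdderOutput fullAdder(unsigned a, unsigned b, unsigned carryIn) {
    unsigned partial = a ^ b;                          // first XOR gate
    unsigned sum     = partial ^ carryIn;              // second XOR gate
    unsigned carry   = (a & b) | (carryIn & partial);  // two AND gates feeding one OR gate
    return {sum, carry};
}

// Chains 8 full adders the way an 8-bit ripple-carry circuit does:
// each stage's carry-out feeds the next stage's carry-in.
std::uint8_t rippleCarryAdd(std::uint8_t x, std::uint8_t y) {
    std::uint8_t result = 0;
    unsigned carry = 0;
    for (int bit = 0; bit < 8; ++bit) {
        AdderOutput out = fullAdder((x >> bit) & 1u, (y >> bit) & 1u, carry);
        result |= static_cast<std::uint8_t>(out.sum << bit);
        carry = out.carry;
    }
    return result;  // the final carry-out is dropped, so sums wrap modulo 256
}
```

For any two 8-bit values, `rippleCarryAdd(x, y)` should agree with `static_cast<std::uint8_t>(x + y)`. For example, `rippleCarryAdd(100, 55)` is 155.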

There are several parts inside the CPU that are involved in the processing:

- Control Unit: This part directs the operation of the processor, sort of the brain of the operation. It directs the operation of the other units by providing signals that control the timing and ordering of their operations.

- Arithmetic Logic Unit [ALU]: This part of the processor performs operations on integers, including arithmetic and bitwise operations. The data inputs for the ALU may come from registers on the CPU or from outside the CPU in RAM, ROM, or other devices. They may even be constants that the ALU generates internally. These inputs are generally called operands.

- Address Generation Unit [AGU]: This part calculates the addresses the CPU uses to access the main memory of the computer. Since an address calculation must be done for every memory lookup, doing it in the main ALU would add clock cycles to every such operation. Having a separate address calculation unit offloads that work, making the CPU faster overall.

- Memory Management Unit [MMU]: This part [which may be external but tightly coupled to the CPU] translates the logical addresses calculated by the AGU into physical addresses in RAM. It also provides paging and memory protection facilities to support multi-programming and virtual memory operations.

- Cache Memory [Cache]: This is a special type of memory that is small, fast, and close to the core of the processor. It stores frequently used data that has previously been fetched from RAM, on the premise that what was used recently will be used again soon. There are several levels of cache, the most common of which are L1, L2, and L3.
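To make the AGU and MMU descriptions a little more concrete, here is a C++ sketch of the two calculations. The effective-address formula is the one x86 addressing modes use: base plus index times scale plus displacement, with a scale of 1, 2, 4, or 8. The translation half is a deliberately simplified assumption. It uses a single-level page table with 4 KiB pages held in a hash map, whereas real MMUs walk multi-level tables, cache translations in a TLB, and check permission bits. `effectiveAddress`, `ToyPageTable`, and `translate` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>
#include <unordered_map>

// AGU-style calculation: base + index * scale + displacement.
std::uint64_t effectiveAddress(std::uint64_t base, std::uint64_t index,
                               unsigned scale, std::int64_t displacement) {
    assert(scale == 1 || scale == 2 || scale == 4 || scale == 8);
    // Unsigned arithmetic wraps modulo 2^64, so a negative displacement subtracts.
    return base + index * scale + static_cast<std::uint64_t>(displacement);
}

// MMU-style translation, greatly simplified (assumed 4 KiB pages, one-level table).
constexpr std::uint64_t kPageSize = 4096;  // 2^12 bytes

struct ToyPageTable {
    std::unordered_map<std::uint64_t, std::uint64_t> pageToFrame;  // virtual page -> physical frame

    // Returns the physical address, or nothing if the page is unmapped
    // (the point where a real MMU would raise a page fault).
    std::optional<std::uint64_t> translate(std::uint64_t virtualAddress) const {
        std::uint64_t page   = virtualAddress / kPageSize;  // which page
        std::uint64_t offset = virtualAddress % kPageSize;  // where inside the page
        auto it = pageToFrame.find(page);
        if (it == pageToFrame.end()) return std::nullopt;
        return it->second * kPageSize + offset;             // same offset, new frame
    }
};
```

With page 1 mapped to frame 5, the virtual address `0x1234` (page 1, offset `0x234`) translates to `5 * 4096 + 0x234`. An address on an unmapped page returns an empty result instead.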

Assembly Language

Programmers are better off learning assembly than not learning it. For one thing, it gives you a more intimate, hands-on understanding of computer systems, as you can see from the following quote:

Assembly is a mechanism by which a programmer can learn details of computer hardware, CPU components, memory organization, and the interactions among these elements of computer architecture.

— Brian Hall and Kevin Slonka

Other reasons:

- Knowing the hardware instruction set may help you make better decisions in high-level language programming [you'll have a good sense of optimization].

- You'll learn lots of interesting things you can do with bit manipulation.

- You'll learn how arithmetic really works, and how function calls, and closures, and loops, and conditionals, and even parallel computation, really work.

- You may need to write software for device drivers, embedded systems, or software VMs.
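As a taste of the bit manipulation mentioned above, here are a few common idioms written in C++ on a 32-bit word. The function names are illustrative and do not come from a library. C++20 does provide `std::popcount` and `std::has_single_bit` in `<bit>` for the last two.

```cpp
#include <cassert>
#include <cstdint>

// Common bit-manipulation idioms on a 32-bit word. Bit 0 is the one's place; n must be < 32.
constexpr bool testBit(std::uint32_t word, unsigned n)            { return (word >> n) & 1u; }
constexpr std::uint32_t setBit(std::uint32_t word, unsigned n)    { return word | (1u << n); }
constexpr std::uint32_t clearBit(std::uint32_t word, unsigned n)  { return word & ~(1u << n); }
constexpr std::uint32_t toggleBit(std::uint32_t word, unsigned n) { return word ^ (1u << n); }

// Counts the 1 bits. word & (word - 1) clears the lowest set bit,
// so the loop runs once per 1 bit rather than once per bit position.
constexpr int popCount(std::uint32_t word) {
    int count = 0;
    while (word != 0) {
        word &= word - 1;
        ++count;
    }
    return count;
}

// A power of two has exactly one 1 bit.
constexpr bool isPowerOfTwo(std::uint32_t word) {
    return word != 0 && (word & (word - 1)) == 0;
}
```

For example, `setBit(0b1011, 2)` gives `0b1111`, and `popCount(0xFF)` gives 8.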

Topics in Computer Systems

Thanks to Dr. Toal, here are some things to study to get both breadth and depth about computer systems and systems programming, both concepts and real-world examples:


A Quick Quiz to Test Your Knowledge


Open a browser and navigate to this site: https://kahoot.it/.

Enter the game PIN in the box and click …

Beginning Analysis of Number Systems

In order to start understanding how this all works, we need to start with the way computers store, handle, and recognize things, and how they can represent these things to their human counterparts. This means, first of all, we need to understand how computers deal with numbers.

Why numbers? Why not letters or words or sentences?

So first of all, numbers are just symbols. The numbers we are used to, zero through nine, are what is known as the decimal system, of course. It is based on the idea of groups of ten, probably because we have ten fingers. Interestingly, the idea of zero is a relatively new concept. We'll see more about that next week.

But again, the NUMBERS ARE JUST SYMBOLS FOR A CONCEPT. The computer treats EVERYTHING as a set or a string of ones and zeros. The computer's CPU DOESN'T CARE ONE WHIT whether the ones and zeros represent a number, a letter, a sentence, a floating point value, or anything else — it treats them all the same! This is a very important concept for you to wrap your head around and remember for later.

So, back to base 10: we humans can use combinations of these ten symbols to represent any numeric value. Each successive number place to the left of the one we're looking at is exactly one order of magnitude [one power of ten] greater than the one immediately to its right. You know them as the one's place, ten's place, hundred's place, and so on.

For now, though, we need to be aware that decimal, or base 10, is different from the way a computer handles numbers. Digital computers are based on the binary system, which has only two states: ON and OFF, which we represent with one and zero. Don't worry if it seems abstract and difficult to understand how a computer can use only two symbols and do all of the fantastic things it can do. What's important at the moment is that you are aware of the idea of the place values of the binary system.

Whereas each decimal digit can have any of ten values, 0 through 9, each binary digit [which we contract to call a bit] can have only one of TWO values, 0 or 1. And just like the decimal digits, each binary number place has a place value, but those place values are powers of TWO rather than TEN.

So, looking at the number 1234 for example, we have a 4 in the one's place, a 3 in the ten's place, a 2 in the hundred's place, and a 1 in the thousand's place. Remember, though, that these are all powers of ten. For example, the ten's place is $10^1$ and the hundred's place is $10^2$. If we view the number in terms of powers of ten, we get something like:

$$1 \times 10^3 + 2 \times 10^2 + 3 \times 10^1 + 4 \times 10^0$$

Another way to show this would be:

| Place | 1000's | 100's | 10's | 1's |
|---|---|---|---|---|
| Power | $10^3$ | $10^2$ | $10^1$ | $10^0$ |
| Value | 1 | 2 | 3 | 4 |

Notice that as we move from right to left, the exponents keep increasing by one. This is important.

Now, let's turn to the binary system. In this case, the number BASE is different, so instead of powers of TEN, we have powers of TWO. Let's expand the table and replace the number base to see what it would look like in that system:

| Place | one-twenty-eight's | sixty-four's | thirty-two's | sixteen's | eight's | four's | two's | one's |
|---|---|---|---|---|---|---|---|---|
| Power | $2^7$ | $2^6$ | $2^5$ | $2^4$ | $2^3$ | $2^2$ | $2^1$ | $2^0$ |
| Value | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 |

So now for a simple example. Let's take an 8-bit value [which is known as a byte] as follows:

| Place | one-twenty-eight's | sixty-four's | thirty-two's | sixteen's | eight's | four's | two's | one's |
|---|---|---|---|---|---|---|---|---|
| Power | $2^7$ | $2^6$ | $2^5$ | $2^4$ | $2^3$ | $2^2$ | $2^1$ | $2^0$ |
| Value | 1 | 0 | 1 | 1 | 0 | 1 | 0 | 1 |

If we do the math, we'll find we have:

$$(1 \times 2^7) + (1 \times 2^5) + (1 \times 2^4) + (1 \times 2^2) + (1 \times 2^0)$$

…which is:

$$128 + 32 + 16 + 4 + 1 = 181_{10}$$
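The same place-value arithmetic can be written as a short C++ function. This is a sketch, and the name `binaryToDecimal` is hypothetical. It walks the bits from right to left, doubling the place value at each step, and adds the place value in wherever it finds a 1:

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Converts a string of '0'/'1' characters to its value by summing place values:
// the rightmost bit is worth 2^0, the next 2^1, and so on.
std::uint64_t binaryToDecimal(const std::string& bits) {
    assert(bits.size() <= 64);                 // must fit in 64 bits
    std::uint64_t total = 0;
    std::uint64_t placeValue = 1;              // 2^0, the one's place
    for (auto it = bits.rbegin(); it != bits.rend(); ++it) {  // walk right to left
        assert(*it == '0' || *it == '1');
        if (*it == '1') total += placeValue;   // only 1 bits contribute
        placeValue *= 2;                       // the next place is worth twice as much
    }
    return total;
}
```

`binaryToDecimal("10110101")` returns 181, matching the hand calculation above.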

In-class Assignment #2 — Running the Bases

Now, in class, get with your partner and work out the following for practice. The better you get at translating between bases, the easier it will be to understand the different ways that numbers are represented in the computer. Next week we'll start out with an easy way to convert from decimal to binary, but for now see if you can figure out a way on your own. Feel free to use the Internet for any assistance you can get. Here are the problems:

- Convert $101_2$ to decimal

- Convert $1010101_2$ to decimal

- Convert $0101010_2$ to decimal

- Convert $11111111_2$ to decimal

- Convert $1100110011001101_2$ to decimal

- Convert $11111111111111111111_2$ to decimal

- Convert $111100001111_2$ to decimal

- Convert $100000000000_2$ to decimal

- Convert $101010111100110111101111_2$ to decimal

- Convert $5421_{10}$ to binary

- Convert $80_{10}$ to binary

- Convert $12345_{10}$ to binary

- Convert $15_{10}$ to binary

- Convert $65535_{10}$ to binary

- Convert $32767_{10}$ to binary
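Once you have worked the problems by hand, a sketch like the following can help you check your decimal-to-binary answers. It uses repeated division by 2. Each remainder is the next bit, starting from the one's place, so the bits come out right to left and are reversed at the end. The name `decimalToBinary` is illustrative, and this is only one of several ways to do the conversion.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>

// Converts a non-negative integer to binary by repeated division by 2.
std::string decimalToBinary(std::uint64_t value) {
    if (value == 0) return "0";
    std::string bits;
    while (value > 0) {
        bits += static_cast<char>('0' + value % 2);  // the remainder is the current bit
        value /= 2;                                  // move on to the next place
    }
    std::reverse(bits.begin(), bits.end());          // remainders came out right to left
    return bits;
}
```

For example, `decimalToBinary(181)` returns `"10110101"`, the byte from the table above.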

Homework Assignment #2

Reminder: homework02 is due NEXT WEEK ON WEDNESDAY/THURSDAY. All homework assignments are available from the syllabus page and also from the assignments page, but just to make sure you remember …

Week Two Wrap-up

That's probably enough for this week. Be sure to check out the links to the related materials that are listed on the class links page.